Displaying items by tag: database

Pitney Bowes Postage Payment System Modernization HP Tandem COBOL to C# on AWS

The Pitney Bowes Postage Payment Application had been running on COBOL for decades on an HP NonStop Tandem mainframe. To seize the opportunities of the cloud and to reduce overall technical debt, Pitney Bowes needed to modernize the Tandem COBOL to C# .NET Core. Just as important, the HP NonStop Tandem database needed to be migrated to a modern Microsoft SQL Server database and deployed to AWS. TSRI transformed the application at 99.96% automation and deployed the modernized application on the AWS cloud.

| Field | Value |
| --- | --- |
| Customer | Pitney Bowes Inc. |
| Source & Target Language | COBOL to C# .NET Core on AWS |
| Lines of Code | 390,000 |
| Duration | 6 Months |
| Services | Automated Code Transformation (99.96% level of automation), Automated Refactoring, Database Conversion: File based system to a Microsoft SQL Environment, Integration and Testing Support, Transformation Blueprint®, Application "As-Is" Blueprint® |

- sql

- transformation blueprint

- Platform Migration

- modernization

- Software Code Modernization

- Refactoring

- Code Documentation

- modernize

- migration

- Data

- Data Migration

- assessment

- architecture

- multitier

- Microservices

- Micro Services

- monolithic

- Security Refactoring

- application modernization

- System Modernization

- Object Oriented

- Quality Output

- Asis Blueprint

- Software Modernization

- Modern Architecture

- ArchitectureDriven

- Software Transformation

- transformation

- cloudnative

- containerized

- modularized

- State Contract

- RFI

- RFP

- cobol

- COBOL to C#

- VAX COBOL

- RMS flat file

- database

- Open VMS RMS Flat Files

- to SQL

- to SQL Server environment

- Architecture Driven

- Cloud based

- MS SQL Server

- Microsoft SQL

- COBOL to C# NET Core

- NET Core

- AWS Cloud

- HP NonStop Tandem Mainframe

- mainframe modernization

- Mainframe Migration

- HP Tandem

- HP Nonstop

- Postal System Migration

- Postal System Modernization

- Cost Savings

- Mainframe cost saving

- Cost of ownership

- From Monolithic to multitier

- distributed architecture

COBOL to C# - State of Washington OSPI

The State of Washington’s Office of the Superintendent of Public Instruction (OSPI) awarded a sole-source contract to TSRI for modernization of the State’s Apportionment System.

| Field | Value |
| --- | --- |
| Customer | The State of Washington OSPI |
| Source & Target Language | COBOL to C#/.NET |
| Lines of Code | 204,176 |
| Duration | 5 Months |
| Services | Automated Code Transformation, Automated Refactoring, Database Conversion: Open VMS RMS Flat Files to a Microsoft SQL Environment, Integration and Testing Support, Transformation Blueprint®, Application "As-Is" Blueprint® |

- sql

- transformation blueprint

- Platform Migration

- modernization

- Software Code Modernization

- Refactoring

- Code Documentation

- modernize

- migration

- Data

- Data Migration

- assessment

- architecture

- multitier

- Microservices

- Micro Services

- monolithic

- Security Refactoring

- application modernization

- System Modernization

- Object Oriented

- Quality Output

- Asis Blueprint

- Software Modernization

- Modern Architecture

- ArchitectureDriven

- Software Transformation

- transformation

- cloudnative

- containerized

- modularized

- State Contract

- RFI

- RFP

- VAX/COBOL

- cobol

- COBOL to C#

- COBOL to NET

- VAX COBOL

- RMS flat file

- database

- Open VMS RMS Flat Files

- to SQL

- to SQL Server environment

- Architecture Driven

- Cloud based

- MS SQL Server

- Microsoft SQL

Automated Refactoring: The Critical Component to Achieving a Successful Modernization

Using automation to modernize mainframe applications brings a codebase up to today’s common coding standards and architectures. But modernizing an application to its functional equivalent isn’t always enough. Organizations can do more to make their modern code efficient and readable. By building refactoring phases into their modernization projects, organizations can eliminate the Pandora’s box of dead or non-functional code that many developers don’t want to open, especially if it contains elements that just don’t work.

What is Refactoring and How is it Used?

Refactoring, by definition, is an iterative process that automatically identifies and remediates pattern-based issues throughout a modernized application’s codebase. For example, unreferenced variables or redundant snippets could exist throughout the application. A scan known as dead/redundant code refactoring finds and flags every occurrence of this unusable code, then removes it from the codebase. One of TSRI’s current projects found 25,000 instances of a similar issue, each of which would have required 15 minutes of manual remediation, not counting the human error that manual work inevitably introduces and that would require further remediation. That adds up to about 6,250 development hours, well over a year of work for a single developer.

Using TSRI’s automated refactoring engine, however, remediation was complete in an hour.
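To make the idea of a dead-code scan concrete, here is a minimal sketch in Python. It is not TSRI's engine and makes no claim about how that engine works. It uses Python's standard `ast` module to flag names that are assigned but never read. The function name `find_unreferenced_names` and the `SAMPLE` snippet are hypothetical, and the scan ignores scoping rules that a production tool would need to handle.

```python
import ast


def find_unreferenced_names(source: str) -> list[str]:
    """Return names that are assigned in `source` but never read.

    Illustrative only: treats the whole file as one scope.
    """
    tree = ast.parse(source)
    assigned: set[str] = set()
    loaded: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            if isinstance(node.ctx, ast.Store):
                assigned.add(node.id)   # name is written to
            elif isinstance(node.ctx, ast.Load):
                loaded.add(node.id)     # name is read somewhere
    return sorted(assigned - loaded)


# Hypothetical snippet containing one variable that nothing ever reads.
SAMPLE = """
rate = 0.05
legacy_flag = 1
balance = 100
total = balance + balance * rate
print(total)
"""

if __name__ == "__main__":
    for name in find_unreferenced_names(SAMPLE):
        print(f"candidate for removal: {name}")
```

A pattern-based rule like this can be run across an entire codebase in one pass, which is the property that makes automated remediation so much faster than editing each instance by hand.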

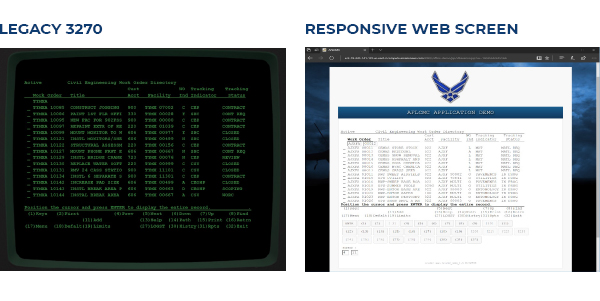

Calling refactoring its own post-modernization phase is, in some ways, misleading. Refactoring typically occurs all the way through an automated mainframe transformation. As an example, in a typical COBOL or PL/1 mainframe modernization, TSRI would refactor the code from a monolithic application to a multi-tier application, with Java or C# handling back-end logic, a relational database accessed through a Data Access Object (DAO) layer, and the user interface (screens) modernized in a web-based format. Believe it or not, many legacy applications still run on 3270 green-screens or other terminals, like the one in the accompanying graphic.

Once the automated modernization of the legacy application is complete, the application has become a functionally equivalent, like-for-like system. However, any deprecated code, functions that may have never worked as planned, or routines that were written but never implemented will still exist. A process written in perhaps 1981, or even 1961, may have required far more code than a simple microservice needs today.

Situations like this are where refactoring becomes indispensable.

Where to begin?

Before a formal refactoring process can begin, it’s important to understand your goals and objectives, such as performance, quality, cybersecurity, and maintainability. This will typically mean multiple workshops to define which areas of the modernized codebase need attention and which are the best candidates for refactoring, based upon the defined goals. These refactorings will either be semi-automated (fully automated with some human input) or custom written (based upon feedback from code scanners or subject-matter experts).

The refactoring workshops can reveal many different candidates for refactoring:

- Maintainability: Removing or remediating bugs, dead or orphaned code, and other anomalies can reduce the codebase by as much as one third, while pointing developers toward any bugs that still need remediation.

- Readability: Obscure functions or variables can be renamed to fit naming conventions that a modern developer understands and that reflect the context of the code’s functionality.

- Security: Third-party tools such as Fortify and CAST can be utilized to find vulnerabilities, but once found they need to be remediated through creation of refactoring rules.

- Performance: Adding reusable microservices or RESTful endpoints to connect to other applications in the cloud can greatly improve the efficiency of the application, as can functionality that enables multiple services to run in parallel rather than sequentially.
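The performance point about running services in parallel rather than sequentially can be sketched as follows. This is an illustration only: `call_service` is a hypothetical stand-in that sleeps to simulate network latency, the service names are invented, and a thread pool is just one of several ways to run independent I/O-bound calls concurrently.

```python
import time
from concurrent.futures import ThreadPoolExecutor

SERVICES = ["rates", "accounts", "audit"]  # hypothetical service names


def call_service(name: str, delay: float = 0.1) -> str:
    """Stand-in for a call to an independent service (assumption)."""
    time.sleep(delay)  # simulates waiting on the network
    return f"{name}: ok"


def run_sequentially() -> list[str]:
    # Each call waits for the previous one to finish.
    return [call_service(name) for name in SERVICES]


def run_in_parallel() -> list[str]:
    # Independent calls overlap their waiting time; map() keeps input order.
    with ThreadPoolExecutor(max_workers=len(SERVICES)) as pool:
        return list(pool.map(call_service, SERVICES))


def timed(fn):
    """Run fn and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn()
    return result, time.perf_counter() - start


if __name__ == "__main__":
    _, seq_time = timed(run_sequentially)
    _, par_time = timed(run_in_parallel)
    print(f"sequential: {seq_time:.2f}s, parallel: {par_time:.2f}s")
```

The gain only applies when the calls do not depend on each other's results. Calls that must run in a fixed order still have to be sequenced.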

What are the Challenges?

- Challenge 1: One reason refactoring must be an iterative process is that some functionality can change with each pass. Occasionally, those changes will introduce bugs to the application. However, each automated iteration will go through regression testing, and the code will then be refactored again to remediate those bugs before the application returns to a production environment.

- Challenge 2: The legacy architecture itself may pose challenges. On a mainframe, if a COBOL application needs to access data, it will call on the entire database and cycle through until it finds the records it needs. Within a mainframe architecture this can be done quickly. But if a cloud-based application needs to call a single data record out of millions or billions from halfway across the world (on cloud servers), the round trip of checking each record becomes far less efficient—and, in turn, slower. By refactoring the database, the calls can go directly to the relevant records and ignore everything else that exists in the database.

- Challenge 3: Not every modernization and refactoring exercise meets an organization’s quality requirements. For example, the codebase for a platform that runs military defense systems is not just complex, it’s mission critical. Armed forces will set a minimum quality standard that any transformation must meet. Oftentimes these standards can only be achieved through refactoring. A third-party tool like SonarQube in conjunction with an automated toolset like TSRI’s JANUS Studio® can be utilized to discover and point to solutions for refactoring to reach and exceed the required quality gate.
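The data-access issue in Challenge 2 can be illustrated with a small sketch that uses Python's built-in `sqlite3` module. The `accounts` table, its columns, and both function names are hypothetical. The sketch contrasts a legacy-style loop that reads records until it finds the one it needs with a keyed query that asks the database for exactly one record.

```python
import sqlite3


def build_db(n: int = 1000) -> sqlite3.Connection:
    """Create an in-memory table with n hypothetical account records."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER)")
    conn.executemany(
        "INSERT INTO accounts (id, balance) VALUES (?, ?)",
        ((i, i * 10) for i in range(1, n + 1)),
    )
    return conn


def find_by_scanning(conn: sqlite3.Connection, wanted_id: int):
    """Legacy-style access: pull records one by one until the match appears."""
    rows_transferred = 0
    for row_id, balance in conn.execute("SELECT id, balance FROM accounts ORDER BY id"):
        rows_transferred += 1
        if row_id == wanted_id:
            return balance, rows_transferred
    return None, rows_transferred


def find_by_key(conn: sqlite3.Connection, wanted_id: int):
    """Refactored access: the database returns only the relevant record."""
    row = conn.execute(
        "SELECT balance FROM accounts WHERE id = ?", (wanted_id,)
    ).fetchone()
    return (row[0], 1) if row else (None, 0)


if __name__ == "__main__":
    conn = build_db()
    print(find_by_scanning(conn, 750))  # many rows cross the wire
    print(find_by_key(conn, 750))       # a single row crosses the wire
```

When the database sits on a remote cloud server, every row the application reads costs network time, so the keyed lookup is the access pattern a database refactoring aims for.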

In conclusion, while an automated modernization will quickly and accurately transform legacy mainframe applications to a modern, functionally equivalent, cloud-based or hybrid architecture, refactoring will make the application durable and reliable into the future.

--

TSRI is Here for You

As a leading provider of software modernization services, TSRI enables technology readiness for the cloud and other modern architecture environments. We bring software applications into the future quickly, accurately, and efficiently with low risk and minimal business disruption, accomplishing in months what would otherwise take years.

New Book by Ulrich and Newcomb

Kirkland, WA (February 22, 2010) – Book release: Information Systems Transformation: Architecture-Driven Modernization Case Studies, by William M. Ulrich and Philip H. Newcomb. Published by Morgan Kaufmann. ISBN: 978-0-12-374913-0. Copyright Feb 2010. $59.95 USD, €43.95 EUR, £29.99 GBP. www.informationsystemstransformation.com

What The Experts Are Saying

According to Grady Booch, IBM Fellow & Chief Scientist, Software Engineering: "Ulrich and Newcomb's book offers a comprehensive examination of the challenges of growing software-intensive systems …"

According to Ed Yourdon, noted Author and Consultant: "Modernization is going to be a more and more important part of the overall IT strategy. William Ulrich and Philip Newcomb's important new book ..."

According to Richard Soley Ph.D., Chairman/CEO, Object Management Group (OMG): "Estimates by internationally-known researchers of the worldwide legacy code base is now approaching a half-trillion lines. That only counts so-called "legacy languages" like COBOL--which drive the world. Add in database schemas …"

About the Book

Information Systems Transformation: Architecture-Driven Modernization Case Studies, a new book by William Ulrich and Philip Newcomb, provides a practical guide to organizations seeking ways to understand and modernize existing systems as part of their information management strategies. It includes an introduction to ADM disciplines and standards, including alignment with business architecture, as well as a series of scenarios outlining how ADM is applied to various initiatives. Ten chapters, containing in-depth, modernization case studies, distill the theory and delineate principles, processes, and best practices for every industry, ensuring the book's leading position as a reference text for all of those organizations relying on complex software systems to maintain their economic, competitive and operational viability.

William M. Ulrich is President of Tactical Strategy Group, Inc. (TSGI) and a management consultant. Mr. Ulrich has been in the modernization field since 1980 and continues to serve as a strategic advisor on business and IT transformation projects for corporations and government agencies. In 2005, Mr. Ulrich was awarded the Keeping America Strong Award for his work in information systems modernization. He is Co-Chair of the OMG Architecture-Driven Modernization Task Force and the OMG Business Architecture Special Interest Group, Editorial Director of the Business Architecture Institute, and author of Legacy Systems: Transformation Strategies.

Philip H. Newcomb is Founder and CEO of The Software Revolution, Incorporated (TSRI) and creator of TSRI's acclaimed architecture-driven modernization services and toolset JANUS Studio®. He is coauthor of Reverse Engineering (Kluwer 1996) with Linda Wills, coeditor of the 2nd Working Conference on Reverse Engineering (IEEE 1995) with Elliot Chikofsky, and principal author of the Abstract Syntax Tree Metamodeling Specification (OMG Specification 2009). With more than 35 publications and 70 successfully completed information system modernization projects, he is a recognized leader in the application of artificial intelligence, automatic programming and formal methods to industrial-scale software modernization.

About Morgan Kaufmann: Since 1984, Morgan Kaufmann has published premier content on information technology, computer architecture, data management, computer networking, computer systems, human computer interaction, computer graphics, multimedia information and systems, artificial intelligence, computer security, and software engineering. Our audience includes the research and development communities, information technology (IS/IT) managers, and students in professional degree programs. Learn more at www.mkp.com. Contact Bob Dodd, 781-313-4726, for an electronic review copy, access to our expert authors, or to publish excerpts of our material.

For more information about TSRI contact: Greg Tadlock, Vice President of Sales, TSRI. Phone: (425) 284-2770. Fax: (425) 284-2785.

COBOL to C++ - STG Inc. - WSMIS-MICAP

As part of the Logistics Management System, the Weapon System Management Information System (WSMIS) is responsible for tracking combat capability and impending parts problems. The system was well regarded during Desert Storm for its ability to expedite repair or procurement of critical items. However, this legacy COBOL system requires modernization to continue to fulfill its mission.

| Field | Value |
| --- | --- |
| Customer | STG Inc. |
| Source & Target Language | COBOL to C++ |
| Lines of Code | 39,654 |
| Duration | 4 months |
| Services | Code Transformation, Automated Refactoring, Database Transformation, Testing and Implementation Support, Transformation Blueprint® |

- C++

- COBOL to C++

- Code Transformation

- automated refactoring

- Database Transformation

- Testing and Implementation

- transformation blueprint

- Software code

- modernization

- migration

- US Air Force

- military

- cobol

- jcl

- batch

- Oracle 9i Database

- oracle

- database

- Windows Based

- Flat Files

- mainframe

- architecture

- amdahl

COBOL to C++ - U.S. Air Force / WSCRS I & II

The U.S. Air Force's Weapons System Cost Retrieval System (WSCRS), designation H036C, was written in COBOL, ran on an Amdahl-5890 platform, and used a flat-file database. The system required modernization by the Wright-Patterson Mission System Group (MSG) to improve base support for the Air Force weapon financial systems. TSRI transformed 100% of the WSCRS COBOL code into C++ and facilitated an error-free delivery to the customer several weeks ahead of schedule.

| Field | Value |
| --- | --- |
| Customer | US Air Force |
| Source & Target Language | COBOL to C++ |
| Lines of Code | 627,500 |
| Duration | 5 months |
| Services | Code Transformation, Automated Refactoring, Installation and Testing Support, Remote Support for Customer Acceptance, Transformation Blueprint® |

- cobol

- C++

- transformation blueprint

- Code Transformation

- Automatic Refactoring

- US Air Force

- COBOL to C++

- amdahl

- mainframe

- Flat File

- database

- Database Transformation

- usaf

- data manipulation

- modernization

- migration

- Software Code Modernization

- transformation

- Legacy Software code

- COBOL Transformation

- COBOL Conversion

- IT Budget

- dyncorp

- Windows OS

- Windows Operating System

- Mainframe code conversion

- Mainframe to C++

- Functional Equivalent System